A Full Automation Stack Rebuild: Make to n8n, AI Agent, Custom Dashboard

Published with client approval. Business details are anonymized at the client's request.

Published March 9, 2026

The Starting Point

A streaming service provider came to me with three Make scenarios and a problem.

The scenarios were handling everything: lead capture, trial activation, order processing, subscription management, customer notifications. Three workflows doing the work of ten, each one a long horizontal chain where the router splits into two near-identical branches, and the heavy logic sits inside inline code execution nodes scattered across the flow.

It worked. Until it didn't.

Two things broke down at the same time. First, execution limits. At roughly 50 leads per day, before you count orders, renewals, retries, and notifications, they were burning through Make's operation counts fast. Scaling meant paying significantly more for a platform that was already showing its structural limits.

Second, they wanted to add an AI agent to handle customer support on WhatsApp and Email. The customer service team couldn't cover every hour, and leads lost to unavailability were a real, documented revenue problem. They needed something that could take over when the team was offline, handle common questions, and hand off cleanly when a human came back.

Bolting an AI agent onto the existing Make setup wasn't the right call. The workflows were already hard to reason about. Adding agent logic, with memory, tool-calling, escalation detection, and enable/disable control, on top of three tangled scenarios would have made everything worse. This was the moment to rebuild properly.

The workflows weren't the only problem. The data model needed a full redesign, and the processes needed to be modeled properly before any implementation started.

What Was Wrong With the Data Model

The client had two tables. Everything lived in them: lead contact info, streaming account credentials, order details, trial flags, message history. All of it crammed into two flat structures.

The consequence was visible in the Make workflows. Checking whether a trial reminder had been sent meant reading a boolean flag off the lead record. The streaming account credentials (username, password, expiration date) lived on the same row as the customer's WhatsApp number. Orders had no independent existence: they were implied by the state of the lead.

I redesigned this into five tables before writing a single workflow: Leads, Accounts (streaming credentials and status), Orders (full order lifecycle), Messages (every notification sent, with channel, type, and delivery status), and Events (an audit log of everything that happened). Each table has one responsibility. Foreign keys connect them cleanly.

This matters because workflows are only as clean as the data model underneath them. When your data is tangled, your logic to read and write it becomes tangled too. The boolean flags on the lead record (isTrialSent, isTrialReminderSent, isTelegramed) weren't a quirk — they were a symptom of a schema that had no place to put this information properly.

With a Messages table, you don't need flags. You query whether a message of type trial_reminder exists for this lead. The data carries its own history.
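A minimal sketch of that query, using SQLite from Python purely for illustration. The article names the tables and the trial_reminder type but not the actual schema or database, so the column names (lead_id, type, channel, status) and the helper message_exists are assumptions.

```python
import sqlite3

# Hypothetical, simplified schema: only the columns this example needs.
SCHEMA = """
CREATE TABLE leads (
    id INTEGER PRIMARY KEY,
    name TEXT NOT NULL
);
CREATE TABLE messages (
    id INTEGER PRIMARY KEY,
    lead_id INTEGER NOT NULL REFERENCES leads(id),
    type TEXT NOT NULL,      -- e.g. 'trial_reminder'
    channel TEXT NOT NULL,   -- e.g. 'whatsapp'
    status TEXT NOT NULL     -- e.g. 'sent'
);
"""


def message_exists(conn: sqlite3.Connection, lead_id: int, message_type: str) -> bool:
    """Return True if a message of this type was already logged for the lead."""
    row = conn.execute(
        "SELECT 1 FROM messages WHERE lead_id = ? AND type = ? LIMIT 1",
        (lead_id, message_type),
    ).fetchone()
    return row is not None


conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
conn.execute("INSERT INTO leads (id, name) VALUES (1, 'Example Lead')")
conn.execute(
    "INSERT INTO messages (lead_id, type, channel, status) "
    "VALUES (1, 'trial_activated', 'whatsapp', 'sent')"
)

# The history answers the question a flag like isTrialReminderSent used to answer.
already_reminded = message_exists(conn, 1, "trial_reminder")
already_activated = message_exists(conn, 1, "trial_activated")
```

Adding a new notification type then needs no schema change: it is just another value in the type column.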

What Was Wrong With the Make Architecture

Before getting into what I built, it's worth being precise about the structural problem. This isn't a "Make bad, n8n good" take. Make is fine for simple linear automations. The problem was that these workflows had outgrown what Make handles well.

The three scenarios: Agent Subscriptions, Orders Automation, Agent Trial. Each one followed the same pattern. One long chain, a router in the middle splitting into two branches that largely duplicated each other, and e2b.dev code execution nodes doing the actual business logic inline. There was no reuse. The customer notification logic was copied across scenarios. Adding a new notification type meant touching multiple places. Debugging a failed execution meant tracing through a flat chain with no meaningful structure.

The core issue: all concerns were mixed in the same flow. The logic for "does this lead already exist?" lived in the same scenario as "call the streaming API" and "send a WhatsApp message." Nothing was separated. Nothing was reusable.

Modeling in BPMN Before Building in n8n

Once I understood the existing system, I modeled it in BPMN before designing anything new.

BPMN is a standard process modeling language. It has precise notation for tasks, gateways, events, sub-processes, and message flows. It's not a flowchart. It forces you to be explicit about what triggers a process, what decisions are made and on what conditions, what the failure paths are, and where one process ends and another begins.

I used it in three stages. First, I modeled the existing Make workflows as BPMN diagrams. This converted the client's current processes from "three horizontal chains of nodes" into a language both he and I could read and discuss. He could see, for the first time, what his automation was actually doing, in terms of business logic rather than tool configuration.

Second, I modeled the expected new system. What should Order Delivery look like? What should happen when a trial expires and the customer doesn't renew? Where exactly does the AI agent hand off to a human? These questions have specific answers in BPMN. We reviewed the diagrams together and agreed on the behavior before I wrote a single node.

Third, I used the BPMN diagrams as the implementation blueprint. Each sub-process in the diagram became a workflow in n8n. The gateways became If nodes or Switch nodes. The message events became Notify Customer sub-workflow calls. The correspondence between the model and the implementation was direct.

The result: no surprises during delivery. The client had already validated the logic. I was building something we'd both agreed on in writing, in a language designed for exactly this purpose.

The Architecture I Built

I decomposed the system into workflows with clear, single responsibilities. Here's what the full n8n setup looks like:

Core operational workflows:

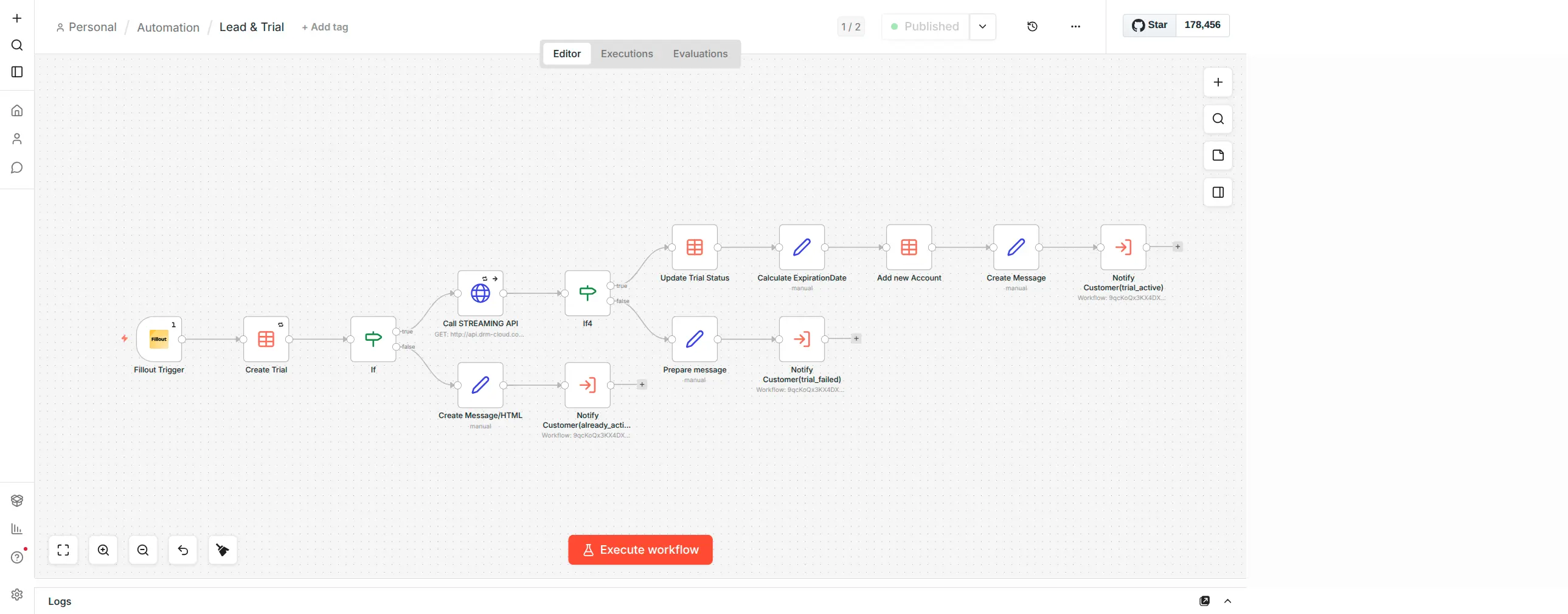

- Lead & Trial: Fillout form triggers lead creation, calls the streaming API to provision a trial account, branches on whether the lead already exists, notifies the customer of trial activation or failure

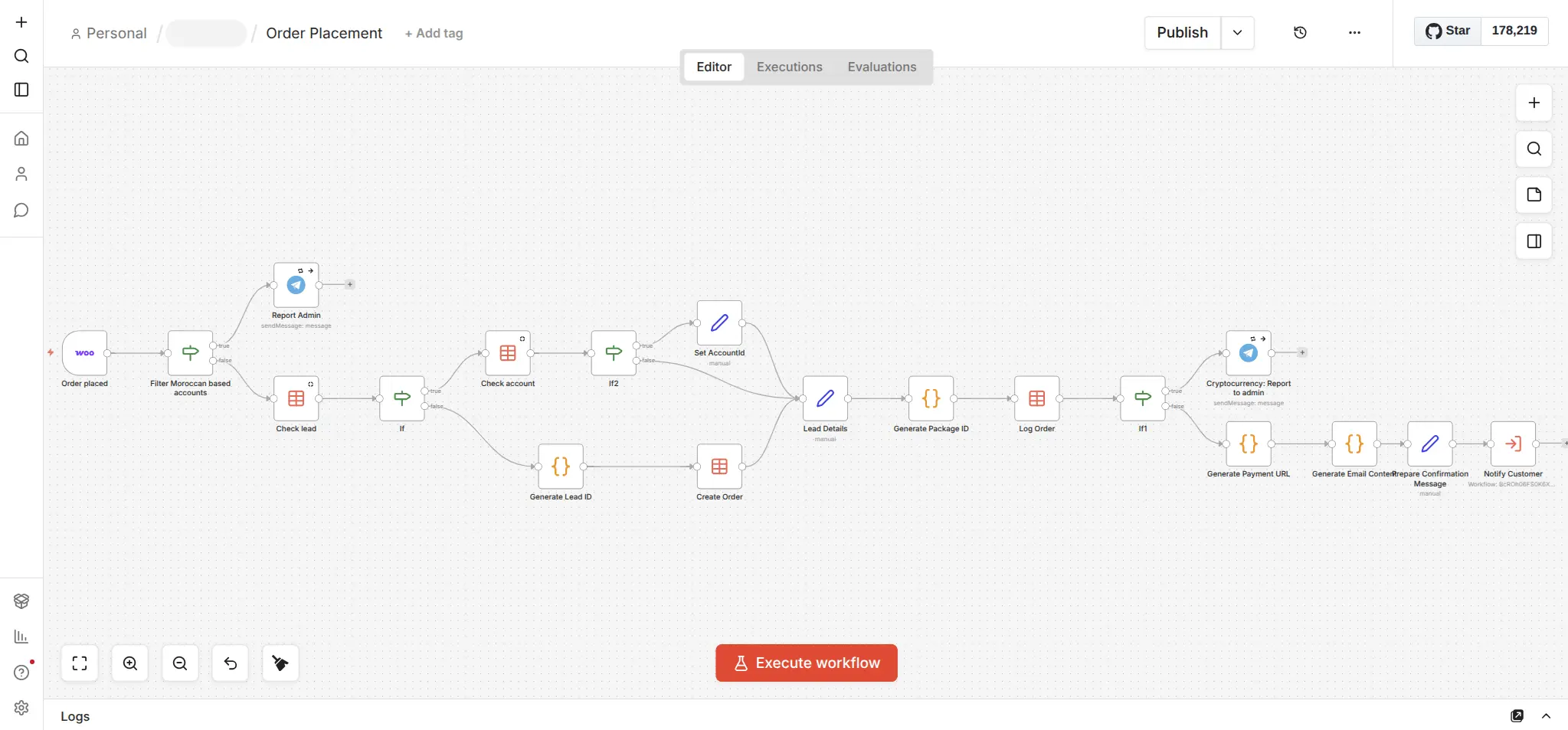

- Order Placement: WooCommerce webhook triggers, filters by geography, checks lead/account existence, generates a package ID, logs the order, generates a payment URL, notifies the customer

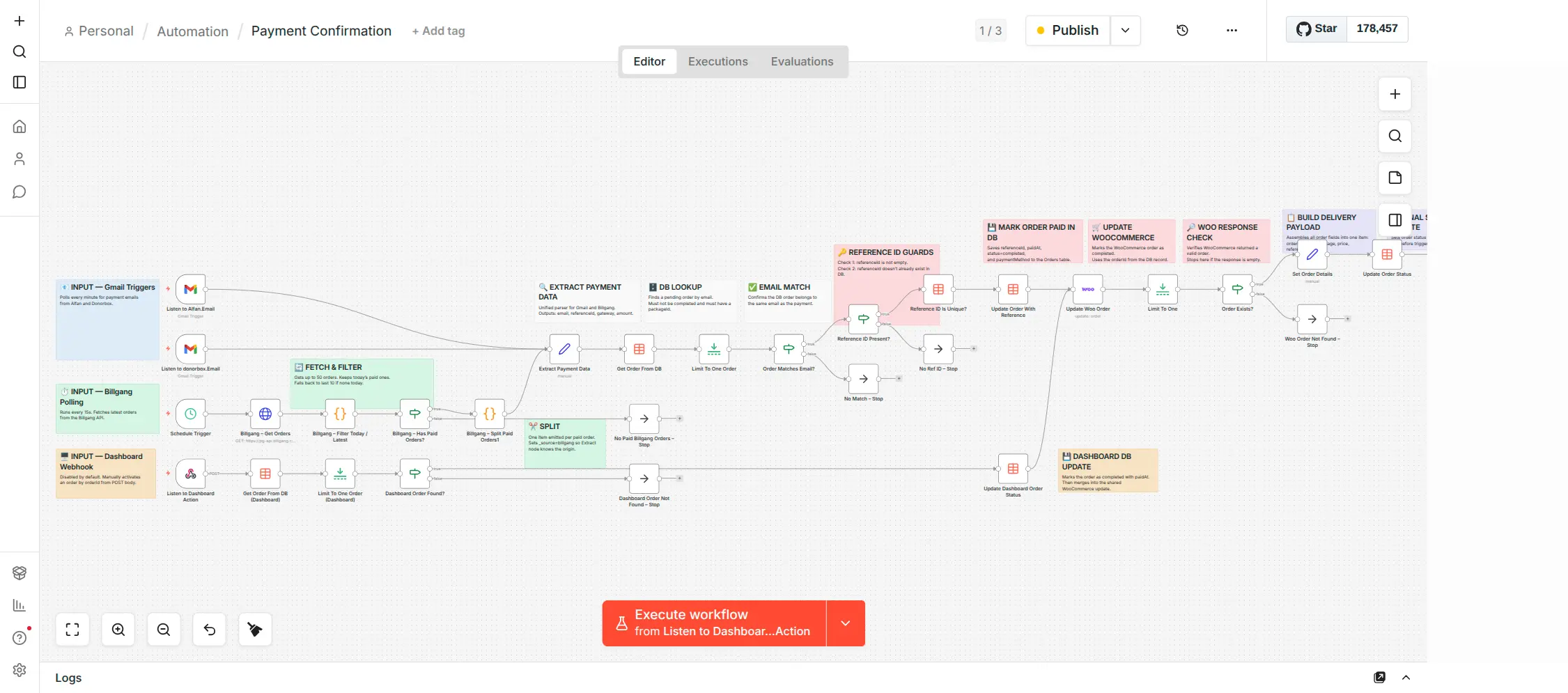

- Payment Confirmed: Listens to multiple payment sources (Gmail from two addresses, dashboard action), reconciles against existing leads and orders, triggers order delivery

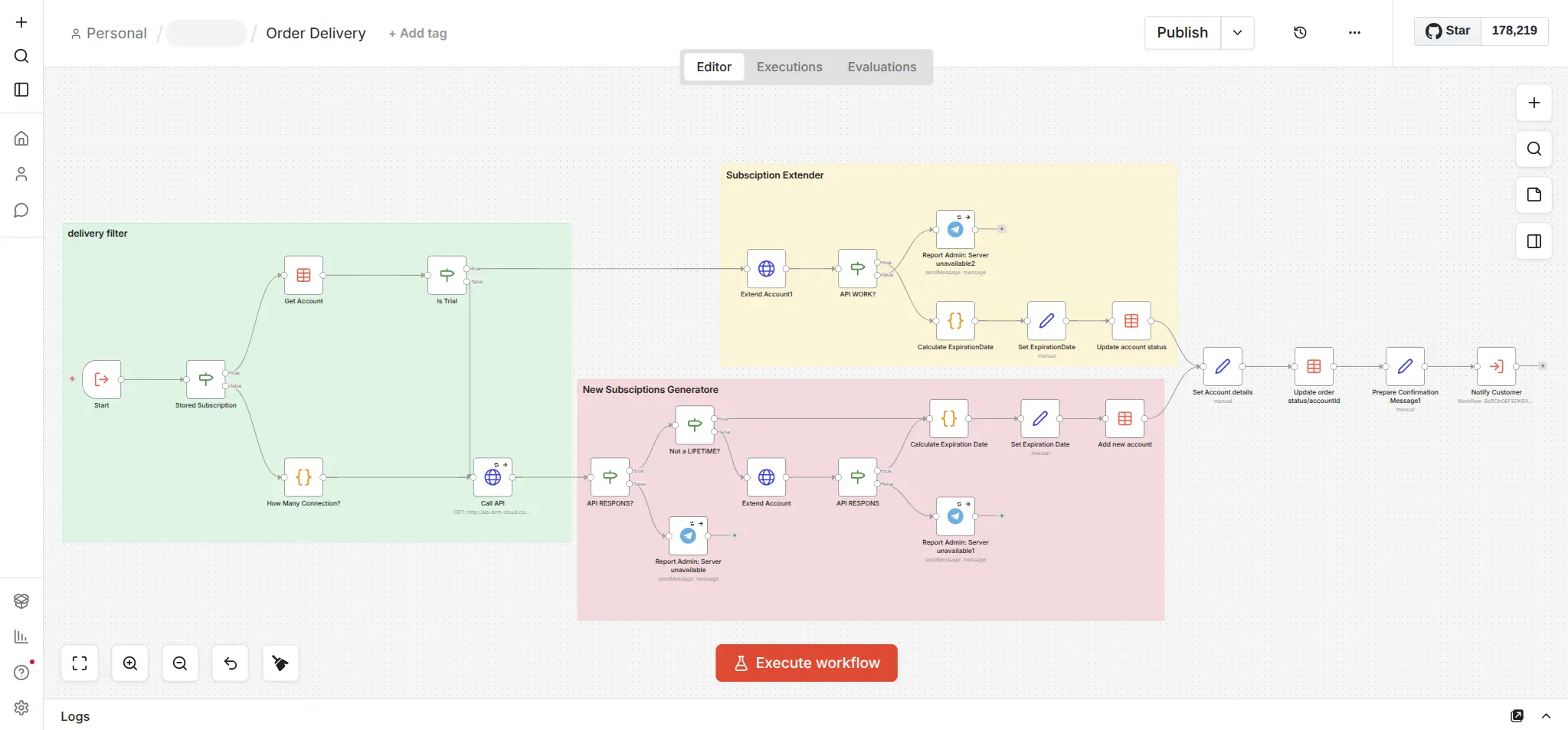

- Order Delivery: The most complex workflow. Handles the actual provisioning logic: checks if it's a trial conversion or new subscription, calls the streaming provider API, handles the "Subscription Extender" path for renewals and "New Subscriptions Generator" path for new accounts, catches API failures and alerts the admin

- Subscription Renewal Reminder: Runs daily, fetches accounts approaching expiration, prepares a reminder message, calls Notify Customer, marks reminder as sent

- Trial Expiry Reminder: Runs hourly (tighter window than subscriptions), same pattern
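The two reminder workflows share one selection step: find accounts expiring inside a window and skip any that already have a reminder. The sketch below shows that step in Python under stated assumptions. The Account fields, the three-day window, and the function name accounts_to_remind are illustrative and not taken from the actual workflows.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Account:
    id: int
    lead_id: int
    expires_at: datetime


def accounts_to_remind(accounts, reminded_account_ids, now, window):
    """Accounts expiring within `window` of `now` that have no reminder yet."""
    cutoff = now + window
    return [
        a for a in accounts
        if now <= a.expires_at <= cutoff and a.id not in reminded_account_ids
    ]


now = datetime(2026, 3, 9, 8, 0)
accounts = [
    Account(1, 10, now + timedelta(days=2)),   # inside a 3-day window
    Account(2, 11, now + timedelta(days=10)),  # too far out
    Account(3, 12, now + timedelta(days=1)),   # inside, but already reminded
]

# Assumed 3-day window for the daily subscription run.
due = accounts_to_remind(accounts, {3}, now, timedelta(days=3))
```

The hourly trial variant would call the same function with a much shorter window; only the schedule and the window differ.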

Shared sub-process:

- Notify Customer: A single sub-workflow called by every other workflow that needs to reach a customer. Handles the channel routing logic: is it a trial message or order message? Send via Telegram or WhatsApp? Which WhatsApp message template applies? Send email. Merge. Persist. One place. Updated once, works everywhere.
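To make the "one place" idea concrete, here is a rough Python sketch of a single routing function. The article does not spell out the real rules, so everything specific here is a placeholder: the preference for Telegram when a chat ID exists, the template names, and the Notification fields are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Notification:
    lead_id: int
    kind: str                              # 'trial' or 'order'
    telegram_chat_id: Optional[str] = None
    email: Optional[str] = None


# Hypothetical template names; the real WhatsApp templates are not given.
WHATSAPP_TEMPLATES = {"trial": "trial_update", "order": "order_update"}


def plan_deliveries(n: Notification) -> list[dict]:
    """Decide which channels to use and return one record per delivery."""
    if n.kind not in WHATSAPP_TEMPLATES:
        raise ValueError(f"unknown notification kind: {n.kind}")
    deliveries = []
    # Assumed rule: use Telegram when a chat ID is known, otherwise WhatsApp.
    if n.telegram_chat_id:
        deliveries.append({"channel": "telegram", "to": n.telegram_chat_id})
    else:
        deliveries.append({"channel": "whatsapp", "template": WHATSAPP_TEMPLATES[n.kind]})
    if n.email:
        deliveries.append({"channel": "email", "to": n.email})
    # Merge step: tag every record with the lead and type so it can be persisted.
    return [{"lead_id": n.lead_id, "type": n.kind, **d} for d in deliveries]


plan = plan_deliveries(Notification(lead_id=1, kind="order", email="a@example.com"))
```

Every caller hands over a request and gets back the records to persist, so a new channel or template is a change in one function rather than in each workflow.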

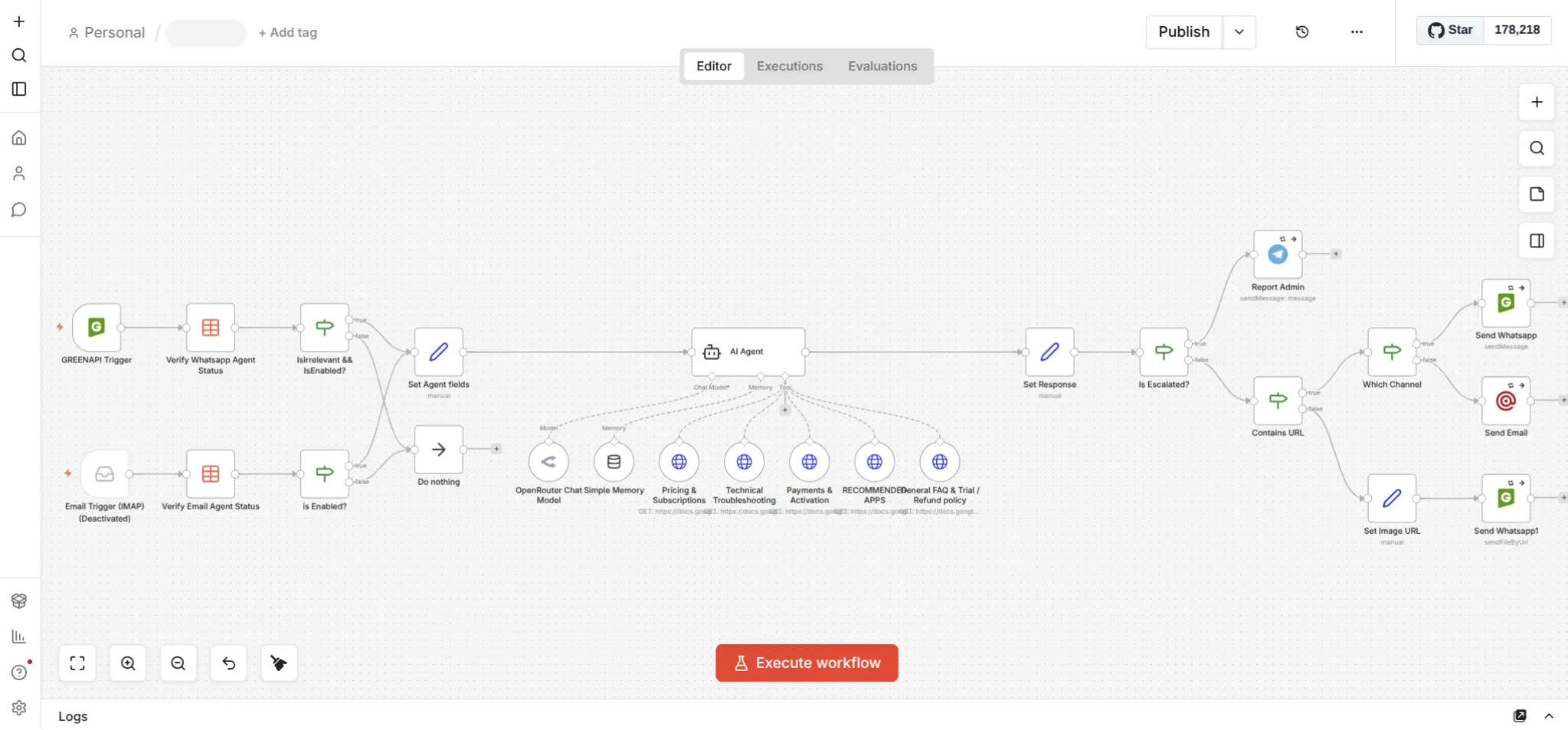

AI Agent:

- AI Agent: WhatsApp trigger via GreenAPI, verifies agent status (enabled/disabled), checks if the message is irrelevant, sets agent context, passes to the AI Agent node with tool access to five Google Docs knowledge bases (Pricing & Subscriptions, Technical Troubleshooting, Payments & Activation, Recommended Apps, General FAQ & Refund Policy), detects escalation, routes response by channel, handles image URLs separately

Error handling:

- Error Logging: Global error trigger catches any workflow failure, passes the error context through an LLM (via OpenRouter) to generate a clean human-readable summary, sends it to the admin via Telegram. The admin gets a plain-language explanation of what failed and why, not a raw JSON dump.
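The step between the error trigger and the LLM call is mostly prompt construction. Here is a minimal Python sketch of it, assuming an error payload with execution and workflow objects similar to what n8n's Error Trigger emits. The prompt wording and the function name build_summary_prompt are illustrative, and the LLM call itself is left out.

```python
def build_summary_prompt(error_event: dict) -> str:
    """Turn a raw error payload into a short prompt for the summarizing LLM."""
    execution = error_event.get("execution", {})
    workflow = error_event.get("workflow", {})
    error = execution.get("error", {})
    return (
        "Summarize this workflow failure for a non-technical admin "
        "in two or three plain sentences: what failed and the likely cause.\n"
        f"Workflow: {workflow.get('name', 'unknown')}\n"
        f"Failed node: {execution.get('lastNodeExecuted', 'unknown')}\n"
        f"Error message: {error.get('message', 'unknown')}\n"
    )


# Example payload with made-up values.
example_event = {
    "execution": {
        "lastNodeExecuted": "Call Streaming API",
        "error": {"message": "Request failed with status code 503"},
    },
    "workflow": {"name": "Order Delivery"},
}

prompt = build_summary_prompt(example_event)
```

Passing only the fields that matter keeps the stack trace and the rest of the raw JSON out of the prompt and out of the admin's Telegram.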

Data access layer:

- APIs workflow: A set of n8n webhook-triggered mini-workflows exposing: Get Leads, Get Accounts, Get Orders, Get Messages by Lead ID, Get Agent Statuses, Update Lead. This is what the dashboard talks to. No direct database access from the frontend.

The AI Agent Design

The enable/disable mechanism deserves attention. This wasn't just a feature request; it was the core design constraint.

The client's customer service team works specific hours. Outside those hours, leads and orders were going cold because nobody was responding on WhatsApp. A fully autonomous agent running 24/7 was the wrong answer too, because the team wanted to handle conversations themselves when available.

The solution: the agent checks its own status before doing anything. The "Verify WhatsApp Agent Status" node at the start of the workflow reads from the data table. If the admin has disabled the agent via the dashboard, the workflow exits immediately and the message sits for the human team. If enabled, the agent takes over.

The same mechanism gates the email trigger (currently deactivated): each channel has its own status, so the architecture supports multiple channels with per-channel enable/disable.
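The status check amounts to a small gate in front of everything else. Here is a sketch in Python, assuming the statuses are stored as a per-channel boolean. The dictionary shape and the function names are hypothetical, since the real values live in the data table read by the "Verify WhatsApp Agent Status" node.

```python
def should_agent_respond(agent_statuses: dict[str, bool], channel: str) -> bool:
    """Unknown or missing channels default to disabled, so a human handles them."""
    return agent_statuses.get(channel, False)


def handle_incoming(message: dict, agent_statuses: dict[str, bool]) -> str:
    if not should_agent_respond(agent_statuses, message["channel"]):
        return "left_for_human"   # workflow exits; the message waits for the team
    return "handled_by_agent"     # continue to the relevance check and the agent


# Example state as the dashboard toggle might leave it.
statuses = {"whatsapp": True, "email": False}

whatsapp_result = handle_incoming({"channel": "whatsapp", "text": "Hi"}, statuses)
email_result = handle_incoming({"channel": "email", "text": "Hi"}, statuses)
```

Defaulting to disabled is a deliberate choice in this sketch: if the status cannot be read, the safer failure is a message waiting for a human rather than an agent replying when the team expected to.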

The knowledge base design is also deliberate. Five separate Google Docs, each covering a distinct topic area, all exposed as tools to the AI Agent node. The agent retrieves only what's relevant to the query. This is cleaner than a single giant prompt with all product knowledge stuffed in, and easier to update. When pricing changes, the client updates one Google Doc. The agent picks it up immediately.

Escalation is a separate detection step after the agent generates a response. If the response triggers the escalation condition, it routes to "Report Admin" before sending to the customer. The admin gets a Telegram notification and can step in. This is not the agent deciding to escalate; it's the workflow detecting escalation signals in the output and acting on them. The distinction matters.
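As a sketch of that separation, the detection can be a plain function applied to the agent's output, outside the agent itself. The actual escalation condition is not described here, so the signal phrases below are invented placeholders, as are the step names.

```python
# Hypothetical signal phrases; the real escalation condition is not specified.
ESCALATION_SIGNALS = ("refund", "speak to a human", "i'm not able to help")


def needs_escalation(agent_response: str) -> bool:
    """Check the generated response for any escalation signal."""
    text = agent_response.lower()
    return any(signal in text for signal in ESCALATION_SIGNALS)


def route_response(agent_response: str) -> list[str]:
    """Return the ordered steps the workflow takes for this response."""
    steps = []
    if needs_escalation(agent_response):
        steps.append("report_admin")      # admin is notified first
    steps.append("send_to_customer")
    return steps


normal = route_response("Your plan renews monthly.")
escalated = route_response("I'm not able to help with that, a team member will follow up.")
```

Because the check runs on the output, it behaves the same no matter how the agent was prompted, and the condition can be changed without touching the agent.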

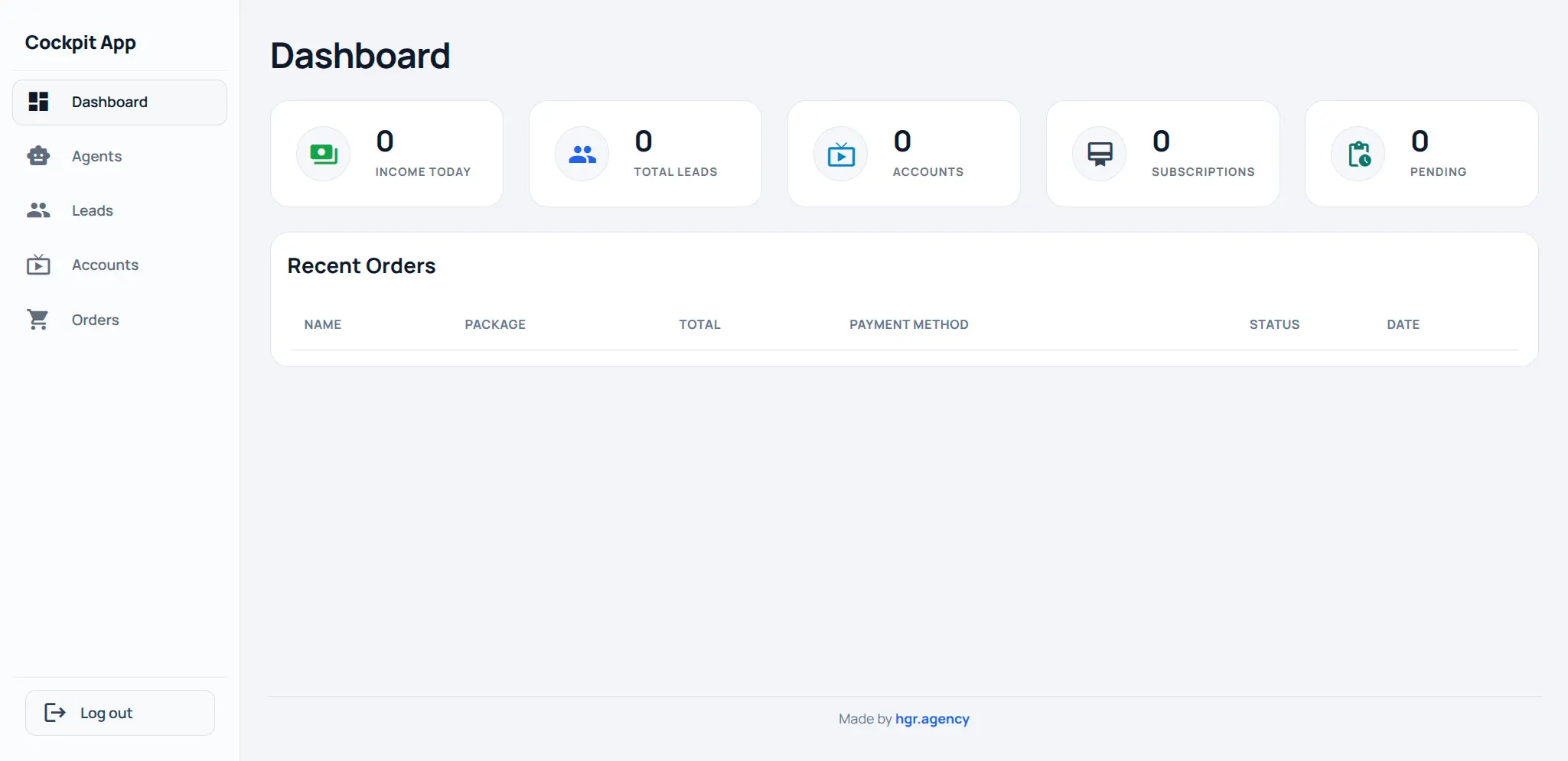

The Dashboard

The admin dashboard gives the client operational control without needing to touch n8n directly.

It shows: income today, total leads, streaming accounts, active subscriptions, pending orders, and a recent orders table with name, package, total, payment method, status, and date. The sidebar gives access to Agents, Leads, Accounts, and Orders as separate views.

Two things make this more than a reporting tool.

First, the agent toggle. The admin can enable or disable the AI agent directly from the dashboard. No workflow editing, no n8n access required. This was a hard requirement: the client's team needed to be able to flip this switch without technical help.

Second, manual subscription activation. Some edge cases require provisioning an account outside the normal order flow: a payment confirmed over the phone, or a manual arrangement with a reseller agent. The dashboard exposes this as a direct action, which triggers the "Listen to Dashboard Action" webhook in the Payment Confirmed workflow. The automation still runs, and the audit trail is maintained.

What the Migration Actually Delivered

Three Make scenarios, replaced by eleven n8n workflows with clear separation of concerns.

Inline code execution nodes doing ad-hoc logic, replaced by a proper sub-workflow architecture with a shared Notify Customer process called by every workflow that needs it.

No error visibility, replaced by LLM-summarized error reports delivered to Telegram the moment anything fails.

No AI support coverage, replaced by a WhatsApp/Email agent with tool-calling, memory, escalation detection, and human handoff that the team controls from a dashboard toggle.

No operational visibility, replaced by a custom dashboard showing live business metrics and giving the admin direct control over the system.

The execution limit problem is gone. The agent gap during off-hours is gone. The "I need to touch three workflows to change one notification" problem is gone.

The Honest Assessment

This project is a good example of when migration makes sense and when it doesn't. If the client had three simple, stable workflows and no plans to add AI, staying on Make would have been fine. The per-operation cost alone is not a reason to migrate.

The tipping point was the combination: execution limits starting to bite, workflows becoming hard to extend, and a new AI requirement that Make's architecture couldn't support cleanly. At that point you're not migrating to save money, you're migrating because the current system is about to become a maintenance liability.

The right time to rebuild is before it becomes an emergency. We were close to that line.

Built with n8n, GreenAPI, WooCommerce, Brevo, Telegram, OpenRouter, and e2b. The dashboard is a standalone web app that calls the webhook-based API layer built in n8n.

All screenshots and workflow details shared with client approval.
